Category: News

-

New NSF project on verifiable safety under visual shifts

We are excited to start the VISUALS project: Verifiable Information-Theoretic Safety Under Augmented Latent Shifts, in collaboration with Yuheng Bu (UCSB) and Jose Principe (UF), sponsored by the NSF EPCN program. This project aims to create an end-to-end methodology to model, analyze, quantify, detect, and adapt to changes in the visual environment of an autonomous…

-

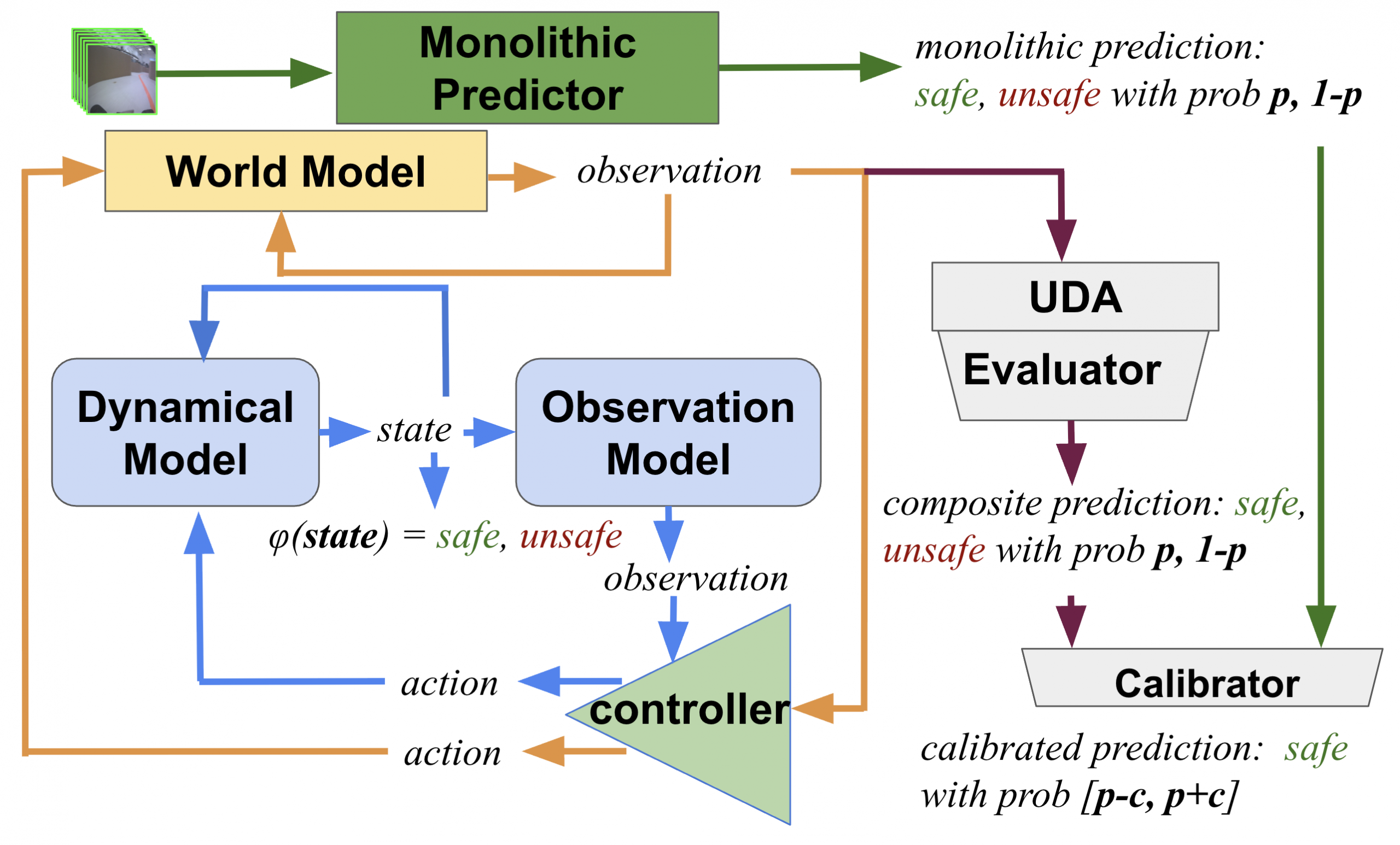

New preprint: how safe will I be given what I saw?

An extension of our modular family of learning-based safety predictors from L4DC 2024, now with transformers and quantization! Citation:

-

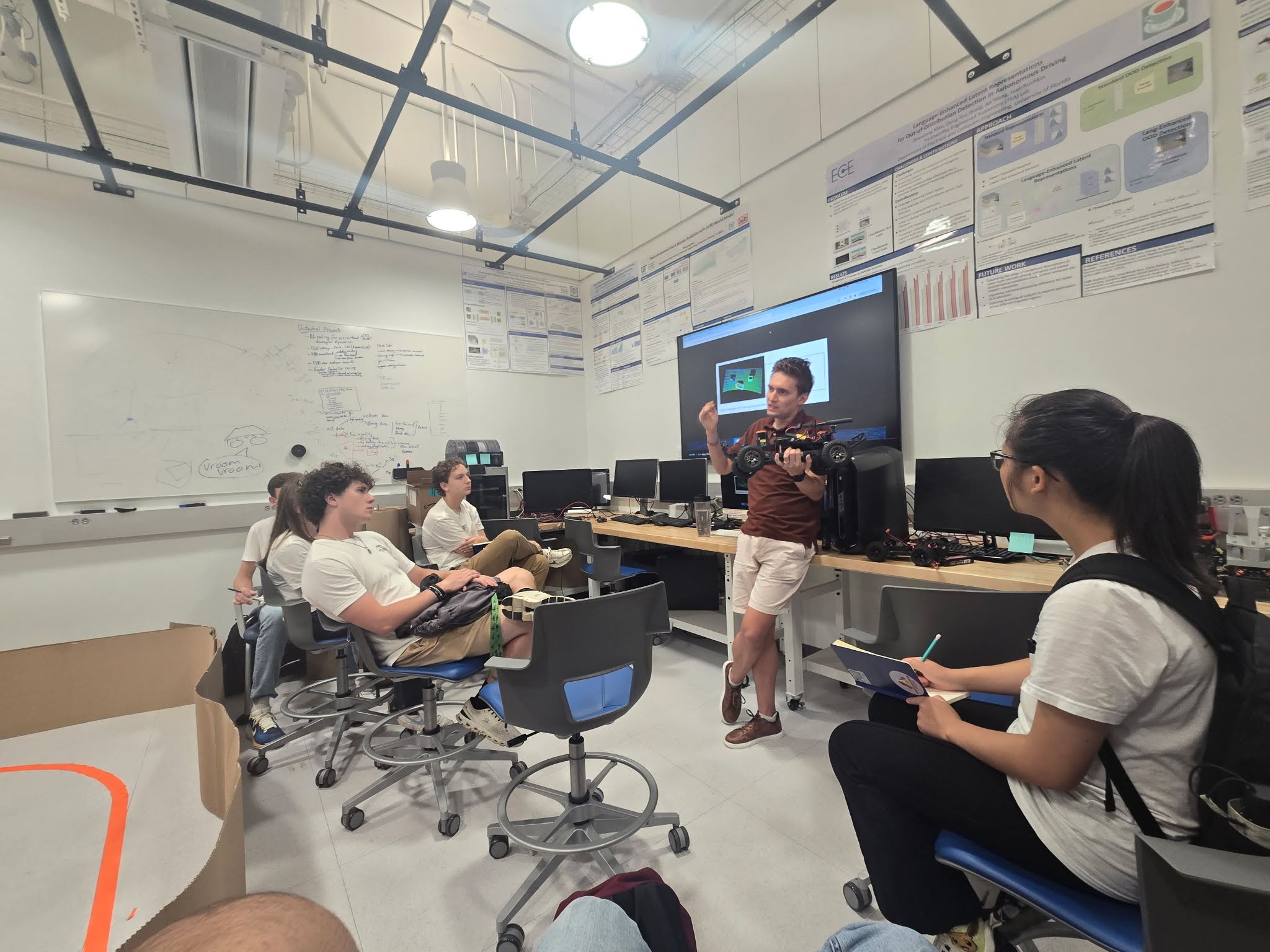

TEA Lab hosts incoming freshmen

This summer, the TEA Lab welcomed a group of incoming UF freshmen as part of STEPUP (the Successful Transition and Enhanced Preparation for Undergraduates Program). The visiting students learned about the importance of safe and trustworthy autonomy, got to poke around the autonomous racing cars, and asked great questions!

-

Ivan talks about conformal reachability at CAV

Ivan went all the way to Croatia to tell people how to put conformal prediction in a closed loop at the International Conference on Computer-Aided Verification (CAV). Doing so would let you verify autonomous systems with neural networks of any size (yes, even a VLA model like RT-2!). The decisive question is, to apply conformal prediction at…

-

Our world models are taking off

Our recent dive into world models is blossoming in several intriguing directions: multimodality, hallucinations, and modular verification. While we’re pushing these directions forward, take a look at a nice overview article about our research on world models.

-

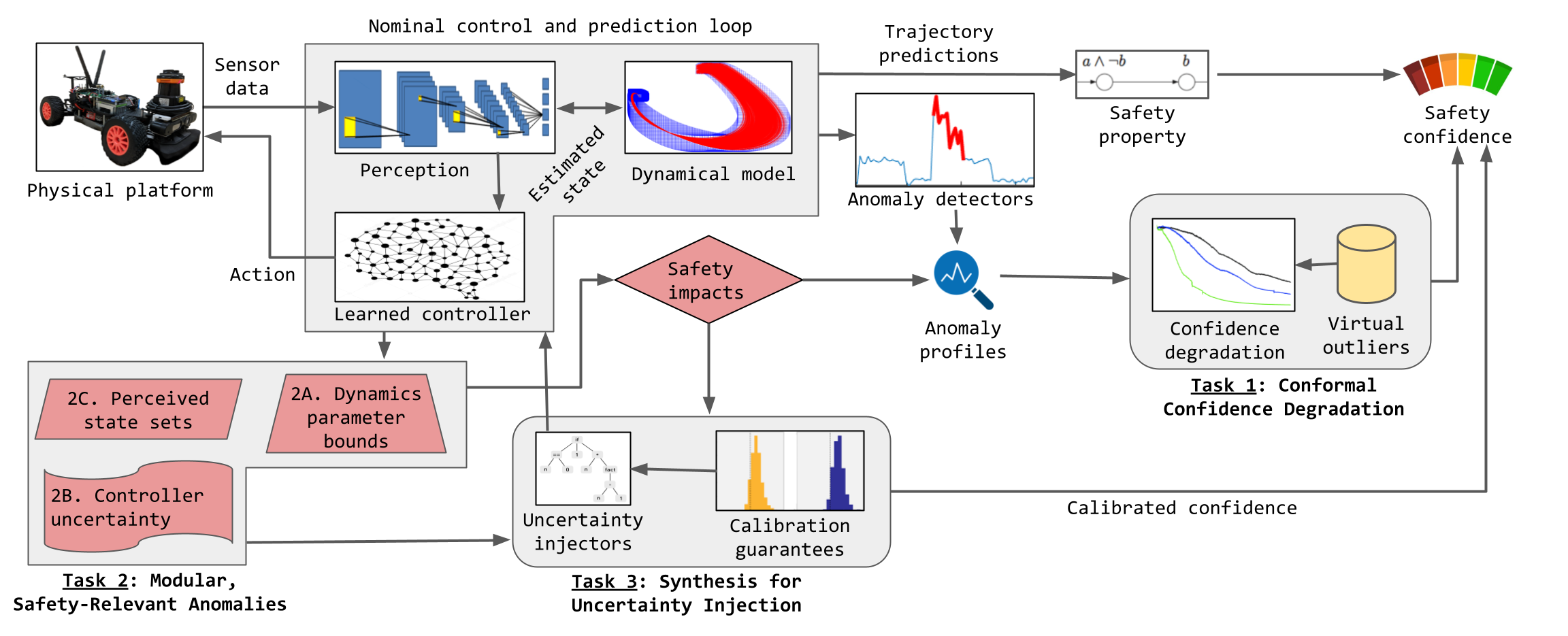

New NSF project on confidence calibration under anomalies

The last decade has seen a flourishing of detection capabilities for various anomalies and out-of-distribution samples. However, the question of what an autonomous system should do after it detects an anomaly is incredibly challenging and seems to be nowhere near a satisfying answer. A reasonable step after detecting an anomaly is to figure out, in…

-

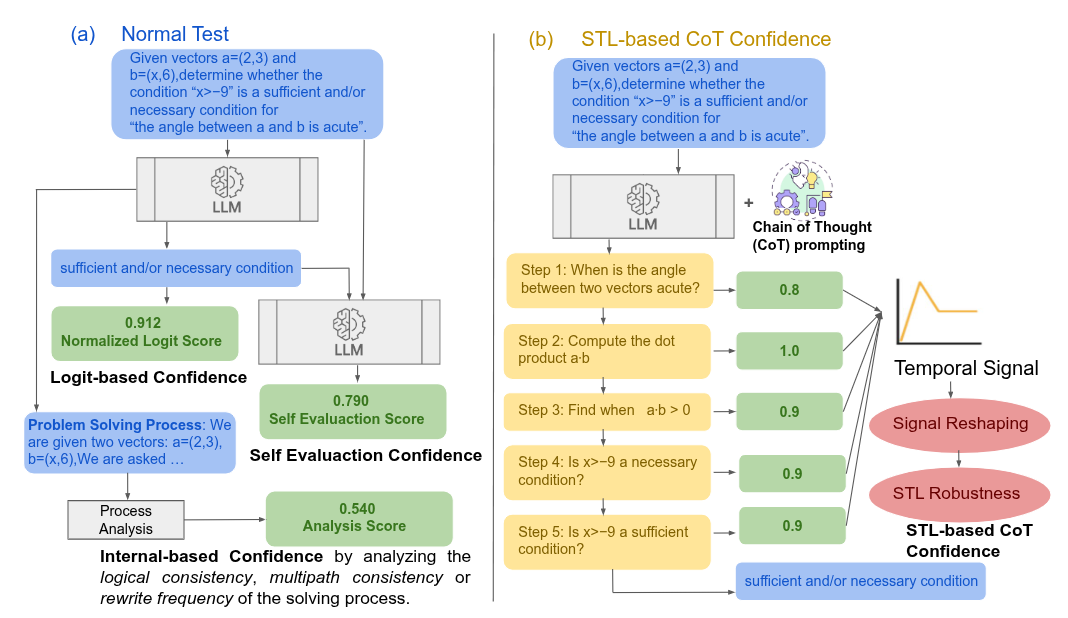

New preprint: chain-of-thought confidence with STL

We turned a SAS course project into a workshop paper on calibrating the confidence in chain-of-thought reasoning using a temporal logic formula. Stay tuned for more!

-

Ivan presents principles of world modeling at NeuS 2025

Ivan revisited his old grazing grounds in Philly to present four principles for making world models more physically grounded. There was an intense discussion of whether purely symbolic simulators should count as generative world models. Citation:

In the meantime, the RPI collaborators Thomas and Rado presented joint work on state-based conformal prediction. Citation:

-

Zhongzheng and Yuyang give racing demo to Nelms family

Thanks to Yuyang and Zhongzheng for impressing the visitors from the generous Nelms family on a new racing track!

-

Jordan & Ivan present world models at ICRA 2025

To the audience’s excitement, Jordan and Ivan presented the lab’s work on principles of physically interpretable world models in two capacities: Citation: