- Ivan receives IEEE TCCPS Early Career Award

- New preprint: anomaly-informed safety confidence

- Zhenjiang presents confidences & world models at CPS Week

- New preprint: elimination for hypothesis testing

- New preprint: TEACar Autonomous Racing Platform

- Maxwell wins Outstanding Student Leadership Award

- New preprint: a broad view of CPS resilience

- Posters at Undergraduate Spring Symposium 2026

- Mathias wins ECE Undergrad Research Excellence Award

- New preprint: latent-entropy anomaly detection

- Ivan presents LLM confidence calibration at Shonan

- Ivan named Malachowsky Family Endowed Rising Star

- New preprint: verifiable deterministic world models

- STL CoT confidence = most innovative poster

- New preprint: statistical-symbolic verification of perception

- ECE showcases new club: Gator Autonomous Racing

- New preprint: a survey of CPS assumptions

- Ivan does publicity for a neuro-symbolic conference

- Jordan presents V&V for vision-based systems at ATVA

- AutoGators win Most Innovative @ Autonomy Hackathon

- IROS showcase: world models, image repair, data cleaning

- Trevor and Jordan win student research awards at ESWEEK

- Demos at HWCOE celebration and dean’s tailgate

- New preprint: online friction estimation for racing

- Ivan presents conservative perception abstractions at Allerton

- New preprint: unified V&V for vision systems

- Ivan talks about high-dimensional verification at USC

- New NSF project on verifiable safety under visual shifts

- New preprint: how safe will I be given what I saw?

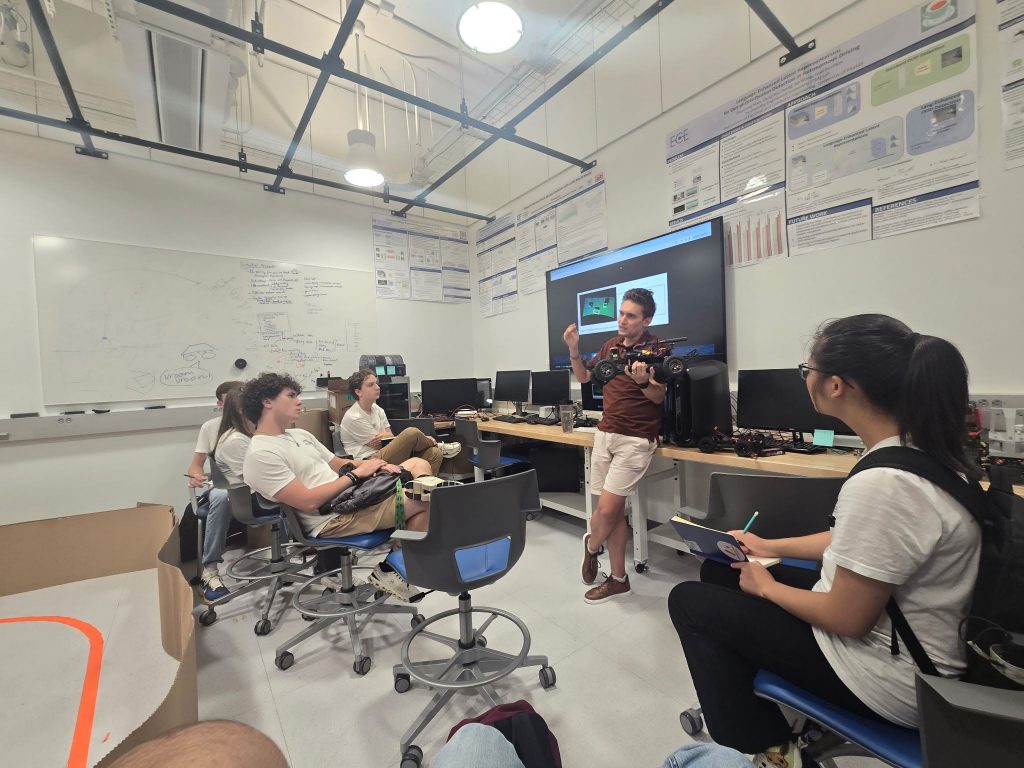

- TEA Lab hosts incoming freshmen

- Ivan talks about conformal reachability at CAV

- Our world models are taking off

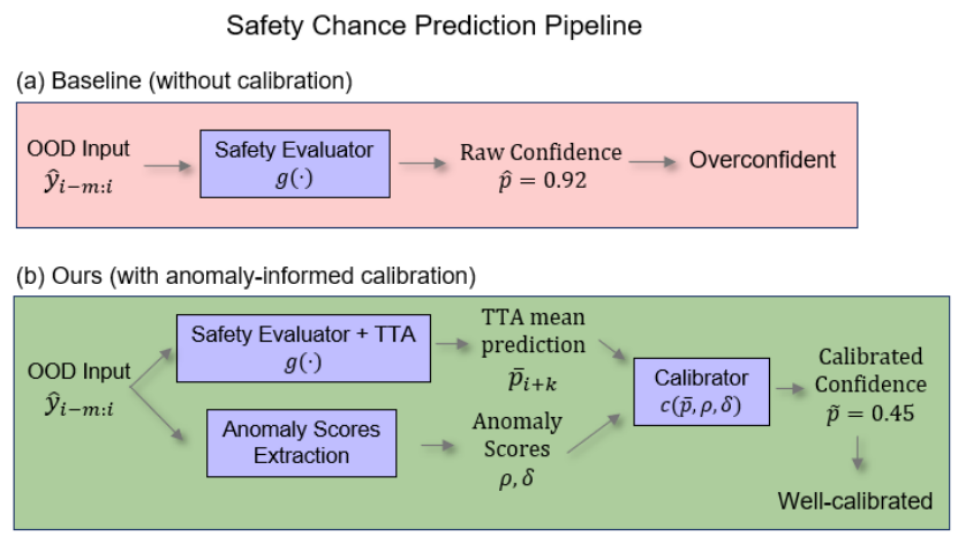

- New NSF project on confidence calibration under anomalies

- New preprint: chain-of-thought confidence with STL

- Ivan presents principles of world modeling at NeuS 2025

- Zhongzheng and Yuyang give racing demo to Nelms family

- Jordan & Ivan present world models at ICRA 2025

- Jordan and Ivan present at CPS-IoT Week 2025

- Lorant wins the student leader and best poster awards

- Carson & Lorant present at the UF Spring Symposium

- New preprint: conservative perception abstractions

- Ivan receives the NSF CAREER Award

- New preprint: generalizable image repair

- New preprint: principles for interpretable world models

- New preprint: state-based conformal prediction

- TEA lab does double racing demos for Spring Visit

- New preprint: stratified neuro-symbolic architecture

- Ivan co-chairs the poster/demo session at ICCPS 2025

- New preprint: physically interpretable world models

- MANY posters, demos, awards at NELMS IoT conference

- Two surveys: neuro-symbolic AIoT and CPS sustainability

- Sam, Yuang, Zhenjiang present posters at UF AI Days 2024

- Ivan presents calibrated visual safety prediction at TACPS workshop at ESWEEK

- Yuang presents high-dimensional reachability at FM 2024

- Zhenjiang presents calibrated safety predictors at L4DC 2024

- New NSF project on neuro-symbolic perception in CPS

- Ivan spends summer at AFRL as a visiting faculty

- Zhenjiang presents two papers and a poster at ICRA 2024

- Ivan presents NN repair with preservation at ICCPS 2024

- Language-enhanced OOD detection: new preprint online

- F1/10 racing demo for the ECE External Advisory Board

- First batch of students finishes the CURE racing course

- Foundation world models: new preprint online

- TEA Lab moves to Malachowsky Hall

- Verifying high-dimensional controllers: new preprint online

- Ivan participates in a panel on dependable space autonomy

- Ivan serves on the PC of ICCPS’24 and AAAI’24

- How safe am I given what I see? New preprint online

- Invited talk at the DACPS workshop & ETH Autonomy Talks

- TEA Lab hosts K-12 students for the Robotics-AIoT Visit Day

- Causal NN controller repair presented at ICAA’23

- Conservative safety monitoring presented at NFM’23

- DonkeyCars are racing autonomously

- Ivan Ruchkin to serve on the PC of ICCPS’23

- TEA Lab is established

Recent News

- Ivan receives IEEE TCCPS Early Career Award

This award recognizes a junior researcher from either academia or industry who has demonstrated outstanding contributions to the field of cyber-physical systems (CPS) in the early stage of his/her career development.

Ivan was awarded “for contributions to rigorous assurance of learning-enabled cyber-physical systems, including their safety verification, trustworthy monitoring, and controller repair.”

Big thanks to the IEEE Technical Committee on Cyber-Physical Systems (TCCPS) for recognizing our work!

- New preprint: anomaly-informed safety confidence

We developed a new safety prediction pipeline that leverages a vector of anomaly scores to predict the system’s safety confidence. Somehow, it manages to generalize to unseen anomalies in sensing and dynamics.

- Zhenjiang presents confidences & world models at CPS Week

Zhenjiang Mao travelled all the way to Brittany in France to present his recent contributions at the CPS-IoT Week 2026.

First, Zhenjiang showcased his proposed PhD work on “Action Confidence Trajectories for Safety Assurance in Autonomous Systems” at the CPS-IoT Week PhD Forum. He gave a short pitch and then presented a poster, which was popular thanks to its unorthodox view of safety assurance.

Second, Zhenjiang presented “Physically Interpretable World Models via Weakly Supervised Representation Learning” in the HSCC/ICCPS main track. This paper targeted an exciting problem setting of learning interpretable state spaces with partial information and weak supervision. However, it also left many curiosities to explore and loose ends to chase in the future.

Citation:

- Zhenjiang Mao, Mrinall Eashaan Umasudhan, Ivan Ruchkin.

Physically Interpretable World Models via Weakly Supervised Representation Learning [Arxiv] [Slides] [Poster] [Demo] [Github].

In Proceedings of the 17th ACM/IEEE International Conference on Cyber-Physical Systems (ICCPS), Saint Malo, France, 2026.

- New preprint: elimination for hypothesis testing

As a starting point towards using bandit-style algorithms for autonomy, we have developed finite-sample bounds for efficient hypothesis testing while eliminating unlikely hypotheses.

Citation:

- Ziyuan Lin, Hoang Ngoc Nguyen, Jie Xu, Ivan Ruchkin.

Finite-Sample Analysis of Elimination in Active Hypothesis Testing [Arxiv].

Preprint, 2026.

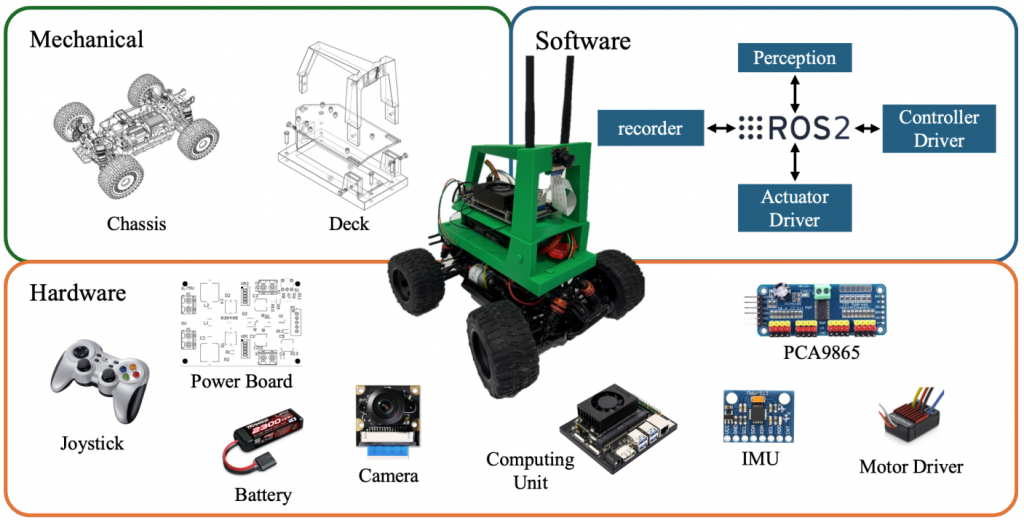

- New preprint: TEACar Autonomous Racing Platform

We have released our shiny new TEACar platform for autonomous racing, inspired by DonkeyCar but with upgraded mechanical, hardware, and software components!

- Maxwell wins Outstanding Student Leadership Award

Maxwell Ruyle, a Mechanical Engineering undergraduate student, received the Bill “Roto” Reuter & Peter Nicholas Outstanding Student Leadership Award from the Department of Mechanical and Aerospace Engineering for leading activities in many contexts:

- A founding member of Gator Autonomous Racing and a lead of the GAR mechanical team

- Designer of the mechanical subsystem of the TEACar

- A founder of a startup focused on defense innovations.

- Industry chair of the UF ASME chapter

Congratulations!

- New preprint: a broad view of CPS resilience

Ivan took part in a large multi-university effort to summarize the state of resilient cyber-physical systems (CPS) and the outlook for future research in this area. Five themes have emerged.

Citation:

- Saurabh Bagchi*, Hyunseung Kim*, Tarek Abdelzaher, Homa Alemzadeh, Somali Chaterji, Glen Chou, Yuying Duan, Fanxin Kong, Michael Lemmon, Yin Li, Mengyu Liu, Wenhao Luo, Meiyi Ma, Sibin Mohan, Ayan Mukhopadhyay, Melkior Ornik, Dimitra Panagou, Kristin Yvonne Rozier, Ivan Ruchkin, Huajie Shao, Sze Zheng Yong, Majid Zamani, Xugui Zhou.

Digital Guardians: The Past and The Future of Cyber-Physical Resilience [Arxiv]

In submission, 2026. * Co-first authors.

- Posters at Undergraduate Spring Symposium 2026

Congratulations to Trevor and Chris (and Vignesh) on their presentations of undergraduate-led research projects!

Featured above: Chris Oeltjen and Vignesh Saravanan with their defensive maneuvering work.

Featured below: Trevor Turnquist with his calibrated filtering work.

- Mathias wins ECE Undergrad Research Excellence Award

Congratulations to Mathias Gast on his well-deserved research award! In the TEA Lab, he has been working on soundly abstracting continuous systems, resulting in multiple paper submissions.

Looking forward to his upcoming contributions!

- New preprint: latent-entropy anomaly detection

In collaboration with ECE colleagues, we have put out a new variant of our unsupervised anomaly detection pipeline. This one uses a latent entropy loss to scramble the latent space, making anomalies harder to reconstruct (and hence easier to detect).

No supervision (including a normal-only dataset) needed!

Citation:

- Yuang Geng, Junkai Zhou, Kang Yang, Pan He, Zhuoyang Zhou, Jose C. Principe, Joel Harley, Ivan Ruchkin.

MLE-UVAD: Minimal Latent Entropy Autoencoder for Fully Unsupervised Video Anomaly Detection [Arxiv].

Preprint, 2026.

- Ivan presents LLM confidence calibration at Shonan

Ivan had the honor of attending an invitation-only visionary workshop #235 on LLM-guided assurance and synthesis for CPS in Shonan, Japan. He presented the lab’s work on calibrating chain-of-thought confidence by discovering temporal patterns with Signal Temporal Logic.

Some of the prominent debates at the workshop included:

- What does the probability of LLM choices have to do with the probability of LLM mistakes?

- Are world models necessary for intent?

- Where do specifications for LLMs come from, and are they truly separate from data?

- What is the equivalence class of semantically valid formalizations of natural language?

- How to combine the perfection of formal methods and the magic of AI?

- How is the explainability of LLM states different from the explainability of LLM outputs?

- Should we prioritize syntactic or semantic robustness in reasoning?

- How to establish multifaceted connections between the modalities of sensing (camera/lidar data), reasoning (language, both natural and formal), and control (actions)?

- How do you expect the robot to clean dishes well if you did not teach it?

- Does Lean have enough support for future, not-yet-existent mathematics?

- What aspects of agent-based CPS engineering should we trust more, and which – less?

- Ivan named Malachowsky Family Endowed Rising Star

Big thanks to the Malachowsky Family for supporting our AI research! Also, congratulations to Alina and Yingying.

Onwards!

- New preprint: verifiable deterministic world models

Our exploration of world models for system assurance resulted in a semi-predictable but currently unfashionable choice: removing randomness and uncertainty from the latent space made world models more verifiable (although a tiny bit less picture-perfect). More surprisingly, this step made the behaviors produced by them more relevant to the real world.

As a result, we were able to transfer guarantees obtained on verifiable deterministic world models to the real image-based system. Intriguing!

- STL CoT confidence = most innovative poster

Zhenjiang and Ani presented a poster with their work on chain-of-thought confidence with signal temporal logic at the Annual Nelms IoT Conference. The core idea of this research is to find patterns in LLM confidence that tend to correlate with correct and incorrect answers. Then, these patterns can be used to determine the confidence, i.e., the chance of the LLM providing the correct answer.

They were awarded the Most Innovative Poster Award by UF Innovate, which included a $500 cash prize. Congratulations!

In the meantime, a couple of other fun events happened around that time:

- Richard Yang and Sriram Yerramsetty gave a demo of autonomous Roboracer overtaking at the Nelms Conference.

- Gator Autonomous Racing hosted its first Build Day to start making a new crop of autonomous racing cars.

- New preprint: statistical-symbolic verification of perception

Our collaboration with RPI has yielded an extended and improved version of our NeuS’25 paper: combining conformal prediction for neural perception with reachability analysis for the dynamics and control. This problem required constructing a discrete abstraction of the perception neural net, which we did with a genetic algorithm.

Citation:

- Yuang Geng*, Thomas Waite*, Trevor Turnquist, Radoslav Ivanov†, Ivan Ruchkin†.

Statistical-Symbolic Verification of Perception-Based Autonomous Systems using State-Dependent Conformal Prediction. [Arxiv]

In submission to the ACM Transactions on Embedded Computing Systems (TECS), 2025. * Co-first authors. † Co-last authors.

- ECE showcases new club: Gator Autonomous Racing

This semester marks a major development: the Gator Autonomous Racing (GAR) student club/design team was officially spun off from the TEA Lab.

This week, the club has put together an impressive showcase with two racing cars (F1/tenth, aka RoboRacer) in the middle of Malachowsky Hall. The demonstration has attracted a lot of attention!

A huge thanks to these guys for putting the showcase together:

- New preprint: a survey of CPS assumptions

We’ve put in a big effort to find, categorize, and analyze assumptions and guarantees in papers on cyber-physical systems since 2014. Now we’re happy to release the results!

Citation:

- Chengyu Li, Saleh Faghfoorian, Ivan Ruchkin.

What Does It Take to Get Guarantees? Systematizing Assumptions in Cyber-Physical Systems [Arxiv].

Preprint, 2025.

We are also sharing our database of analyzed papers and assumptions.

- Not seeing your favorite paper there? Fill out the form to let us know, and we’ll consider including it in the survey (subject to the inclusion criteria).

- Ivan does publicity for a neuro-symbolic conference

Ivan Ruchkin is serving as the publicity chair of the 3rd International Conference on Neuro-Symbolic Systems (NeuS) 2026.

Looking forward to your submissions!

- Jordan presents V&V for vision-based systems at ATVA

Jordan Peper went all the way to Bengaluru, India, to present our work (in collaboration with UIUC) on unified verification and validation of vision-based autonomy at the International Symposium on Automated Technology for Verification and Analysis (ATVA). Allegedly, this is a hot problem, but the abstraction is quite complex. That’s what it takes — for now.

Citation:

- Jordan Peper, Yan Miao, Sayan Mitra, and Ivan Ruchkin.

Towards Unified Probabilistic Verification and Validation of Vision-Based Autonomy [Arxiv] [Github].

In Proceedings of the International Symposium on Automated Technology for Verification and Analysis (ATVA), Bangalore, India, 2025.

- AutoGators win Most Innovative @ Autonomy Hackathon

Congratulations to the team AutoGators (Krish Kapadia, Yilin Liu, Zhenjiang Mao, Ishaan Sen, Zhongzheng Zhang, Zhuoyang Zhou) on winning the “Most Innovative Solution” Award ($10K) at the Mission Autonomy Hackathon organized by AWS and Vanderbilt.

Here is the problem they solved: “Given a swarm of autonomous aerial drones tracking a resupply convoy, use aerial imagery to dynamically detect threats along the convoy’s path and reroute the convoy to avoid them with minimal delay.”

- IROS showcase: world models, image repair, data cleaning

Ivan went all the way to Hangzhou, China, to present several research works on world models, image repair, and data cleaning.

- An invited talk “Reliable World Models: Physical Grounding and Safety Prediction” at the Building Safe Robots: A Holistic Integrated View on Safety from Modelling, Control & Implementation Workshop (sponsored by NOKOV Motion Capture)

– Featuring the work of Zhenjiang Mao, Mrinall Umasudhan, and Jordan Peper

- A paper presentation “Generalizable Image Repair for Robust Visual Control”

– Featuring the work of Carson Sobolewski, Zhenjiang Mao, and Kshitij Maruti Vejre

- A paper presentation “Unsupervised Anomaly Detection Improves Imitation Learning for Autonomous Racing”

– Featuring the work of Yuang Geng, Yang Zhou, Yuyang Zhang, Zhongzheng Zhang, Kang Yang, Tyler Ruble, and Giancarlo Vidal

Here are the paper citations on which these presentations were based:

- Carson Sobolewski, Zhenjiang Mao, Kshitij Vejre, Ivan Ruchkin.

Generalizable Image Repair for Robust Visual Autonomous Racing [Arxiv] [Poster 1] [Poster 2] [Github] [Video] [Slides].

In Proceedings of the International Conference on Intelligent Robots and Systems (IROS), Hangzhou, China, 2025.

- Yuang Geng, Yang Zhou, Yuyang Zhang, Zhongzheng Ren Zhang, Kang Yang, Tyler Ruble, Giancarlo Vidal, and Ivan Ruchkin.

Unsupervised Anomaly Detection Improves Imitation Learning for Autonomous Racing [Poster] [Video] [Slides].

In Proceedings of the International Conference on Intelligent Robots and Systems (IROS), 2025.

- Zhenjiang Mao, Mrinall Eashaan Umasudhan, Ivan Ruchkin.

How Safe Will I Be Given What I Saw? Calibrated Prediction of Safety Chances for Image-Controlled Autonomy [Arxiv] [Github].

In submission to the International Journal of Robotics Research (IJRR), 2025.

- Zhenjiang Mao, Ivan Ruchkin.

Towards Physically Interpretable World Models: Meaningful Weakly Supervised Representations for Visual Trajectory Prediction [Arxiv] [Poster].

Preprint, 2025.

- Jordan Peper*, Zhenjiang Mao*, Yuang Geng, Siyuan Pan, Ivan Ruchkin.

Four Principles for Physically Interpretable World Models [Arxiv] [OpenReview] [Github] [Poster] [Slides].

In Proceedings of the 2nd International Conference on Neuro-symbolic Systems (NeuS), Philadelphia, PA, 2025. * Co-first authors.

- Trevor and Jordan win student research awards at ESWEEK

Congrats to Trevor Turnquist and Jordan Peper on winning the First Undergraduate and Runner-Up Graduate Awards at the ACM Student Research Competition hosted at the Embedded Systems Week 2025!

The papers relevant to these competition submissions:

- Thomas Waite, Yuang Geng, Trevor Turnquist, Ivan Ruchkin*, and Radoslav Ivanov*.

State-Dependent Conformal Perception Bounds for Neuro-Symbolic Verification of Autonomous Systems [Arxiv] [Poster] [Slides summary] [Slides talk] [Github].

In Proceedings of the 2nd International Conference on Neuro-symbolic Systems (NeuS), Philadelphia, PA, 2025. * Co-last authors.

- Jordan Peper, Yan Miao, Sayan Mitra, and Ivan Ruchkin.

Towards Unified Probabilistic Verification and Validation of Vision-Based Autonomy [Arxiv] [Github].

In Proceedings of the International Symposium on Automated Technology for Verification and Analysis (ATVA), Bangalore, India, 2025.

- Demos at HWCOE celebration and dean’s tailgate

The TEA Lab and the newly formed Gator Autonomous Racing (GAR) club collaborated on two back-to-back demos:

- A camera-based DonkeyCar demo for the 10th anniversary of the naming of UF engineering by Herbert Wertheim. This demo on October 3 was led by Zhongzheng Zhang and Tyler Ruble.

- A lidar-based RoboRacer demo for the Dean’s tailgate before the UF-UT Austin football game. This demo on October 4 was led by Ruben Gonzalez-Vera, Sriram Yerramsetty, Richard Yang, and Christopher Oeltjen.

Congrats to the students on successfully demoing our autonomous racing technology!

- New preprint: online friction estimation for racing

Our lab pushed out an experimental project on detecting slip and estimating the tire friction from the onboard sensors (lidar & IMU) on RoboRacer (aka F1/10) cars. No fancy models, no sophisticated data collection, no need for post-processing. It turned out pretty accurate!

- Ivan presents conservative perception abstractions at Allerton

Ivan talked about conservative abstractions of perception-driven systems at the Allerton Conference, held at the University of Illinois Urbana-Champaign. The rumor is that these abstractions are too conservative.

Citation:

- Matthew Cleaveland, Pengyuan Lu, Oleg Sokolsky, Insup Lee, Ivan Ruchkin.

Conservative Perception Models for Probabilistic Model Checking [Arxiv] [Github] [Slides].

In Proceedings of the Allerton Conference on Communication, Control, and Computing, Urbana, Illinois, 2025. Invited paper.

- New preprint: unified V&V for vision systems

In collaboration with UIUC researchers, we have developed a methodology to build uncertainty-aware models (imprecise Markov decision processes) of vision-guided autonomous systems. These models offer a unified methodology for their verification (to get safety guarantees) and validation (to quantify the applicability of these guarantees to the real world). Accepted at ATVA 2025, this paper is a step in the long journey towards more practical formal methods for real-world autonomy.

Citation:

- Jordan Peper, Yan Miao, Sayan Mitra, and Ivan Ruchkin.

Towards Unified Probabilistic Verification and Validation of Vision-Based Autonomy [Arxiv] [Github].

In Proceedings of the International Symposium on Automated Technology for Verification and Analysis (ATVA), Bangalore, India, 2025.

- Ivan talks about high-dimensional verification at USC

The talk included methods for dealing with the high dimensionality of perception and state space. Setting a duration record for Ivan’s research talks, it lasted for 90 minutes (thanks to many insightful questions!).

- New NSF project on verifiable safety under visual shifts

We are excited to start the VISUALS project: Verifiable Information-Theoretic Safety Under Augmented Latent Shifts, in collaboration with Yuheng Bu (UCSB) and Jose Principe (UF), sponsored by the NSF EPCN program.

This project aims to create an end-to-end methodology to model, analyze, quantify, detect, and adapt to changes in the visual environment of an autonomous system. It will bring together techniques and insights from formal methods, information theory, and uncertainty quantification.

- New preprint: how safe will I be given what I saw?

An extension of our modular family of learning-based safety predictors from L4DC 2024, now with transformers and quantization!

Citation:

- Zhenjiang Mao, Mrinall Eashaan Umasudhan, Ivan Ruchkin.

How Safe Will I Be Given What I Saw? Calibrated Prediction of Safety Chances for Image-Controlled Autonomy [Arxiv] [Github].

In submission to the International Journal of Robotics Research (IJRR), 2025.

- TEA Lab hosts incoming freshmen

This summer, the TEA Lab welcomed a group of incoming UF freshmen as part of STEPUP (the Successful Transition and Enhanced Preparation for Undergraduates Program). The visiting students learned about the importance of safe and trustworthy autonomy, got to poke around the autonomous racing cars, and asked great questions!

- Ivan talks about conformal reachability at CAV

Ivan went all the way to Croatia to tell people how to put conformal prediction in a closed loop at the International Conference on Computer-Aided Verification (CAV). Doing so would let you verify autonomous systems with neural networks of any size (yes, even a VLA model like RT-2!).

The decisive question is whether to apply conformal prediction at the perception level or at the control level.

- If you apply conformal prediction at the perception level, you are bounding the perception error. If so, it is wise to account for how this error changes over both state and time. Then state-based conformal prediction is at your service, per Ivan’s talk at the Third Workshop on Trustworthy Autonomous Cyber-Physical Systems (TACPS).

Citation:

- Thomas Waite, Yuang Geng, Trevor Turnquist, Ivan Ruchkin*, and Radoslav Ivanov*.

State-Dependent Conformal Perception Bounds for Neuro-Symbolic Verification of Autonomous Systems [Arxiv] [Poster] [Slides summary] [Slides talk].

In Proceedings of the 2nd International Conference on Neuro-symbolic Systems (NeuS), Philadelphia, PA, 2025. * Co-last authors.

- If you apply conformal prediction at the control level, then you have a whole menu of options: single-step vs trajectory level, states vs actions. Turns out all these options lead to slightly different guarantees to bridge the gap between high dimensions (images) and low dimensions (states). These insights found their way into the poster that Ivan presented at the International Symposium on AI Verification (SAIV).

Citation:

- Yuang Geng, Jake Brandon Baldauf, Souradeep Dutta, Chao Huang, Ivan Ruchkin.

Bridging Dimensions: Confident Reachability for High-Dimensional Controllers [Arxiv] [Springer] [Github] [Poster 1] [Poster 2] [Slides] [Demo (w/ subs)] [Demo (w/o subs)] [Talk].

In Proceedings of the International Symposium on Formal Methods (FM), Milan, Italy, 2024.

Photo credit: Taylor Johnson
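For readers new to the perception-level option, here is a minimal sketch of split conformal prediction applied separately to each region of the state space. It is an illustration under stated assumptions, not the method from the papers cited above: the function names, the uniform binning of a one-dimensional state, and the synthetic data are all hypothetical. Each per-bin bound holds with probability at least 1 - alpha only if the calibration errors in that bin are exchangeable with the error encountered at run time.

```python
import math
import random


def conformal_error_bound(calibration_errors, alpha):
    """Return a bound that a fresh, exchangeable error stays below
    with probability at least 1 - alpha (split conformal prediction)."""
    n = len(calibration_errors)
    # Rank of the conformal quantile: ceil((n + 1) * (1 - alpha)).
    rank = math.ceil((n + 1) * (1 - alpha))
    if rank > n:
        # Too few calibration samples for this alpha: only the trivial bound holds.
        return math.inf
    return sorted(calibration_errors)[rank - 1]


def state_dependent_bounds(samples, alpha, num_bins, state_range):
    """Compute one conformal bound per state bin.

    samples: list of (state, perception_error) pairs with a scalar state.
    The uniform binning is a hypothetical choice made for illustration.
    """
    lo, hi = state_range
    width = (hi - lo) / num_bins
    bins = [[] for _ in range(num_bins)]
    for state, err in samples:
        # Clamp so that state == hi falls into the last bin.
        idx = min(int((state - lo) / width), num_bins - 1)
        bins[idx].append(err)
    return [conformal_error_bound(errs, alpha) for errs in bins]


if __name__ == "__main__":
    random.seed(0)
    # Synthetic data: the perception error grows with a distance-like state.
    states = [random.uniform(0.0, 10.0) for _ in range(2000)]
    data = [(s, abs(random.gauss(0.0, 0.1 + 0.05 * s))) for s in states]
    print(state_dependent_bounds(data, alpha=0.1, num_bins=5, state_range=(0.0, 10.0)))
```

A single bound over all states would have to be as loose as the worst region. Per-bin bounds can be tighter where perception is accurate, at the cost of needing enough calibration samples in every bin.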

- Our world models are taking off

Our recent dive into world models is blossoming in several intriguing directions: multimodality, hallucinations, and modular verification. While we’re pushing these directions forward, take a look at a nice overview article about our research on world models.

- New NSF project on confidence calibration under anomalies

The last decade has seen a flourishing of detection capabilities for various anomalies and out-of-distribution samples. However, the question of what an autonomous system should do after it detects an anomaly is incredibly challenging and seems to be nowhere near a satisfying answer.

A reasonable step after detecting an anomaly is to figure out, in as much detail as possible, how much it affects the operation of the system: (a) How much are the sensing, perception, planning, control, or the environment affected? (b) How much are systemic properties, like safety, affected?

This new NSF CPS project will develop a framework called Methodology for Anomalous Safety Confidence (MASC). This framework will adjust (on the fly) the system’s confidence in its own safety/correctness based on the anomalies that it is detecting. Looking forward to the exciting research ahead!

Links:

- NSF award page

- UF press release

- Confidence composition research that inspired this project

- New preprint: chain-of-thought confidence with STL

We turned a SAS course project into a workshop paper about how to calibrate the confidence in chain-of-thought reasoning using a temporal logic formula:

- Zhenjiang Mao, Artem Bisliouk, Rohith Reddy Nama, Ivan Ruchkin.

Temporalizing Confidence: Evaluation of Chain-of-Thought Reasoning with Signal Temporal Logic [Arxiv]

In 20th Workshop on Innovative Use of NLP for Building Educational Applications (BEA), 2025.

Stay tuned for more!

- Ivan presents principles of world modeling at NeuS 2025

Ivan revisited his old grazing grounds in Philly to present 4 principles for making world models more physically grounded. There was an intense discussion of whether purely symbolic simulators should count as generative world models.

Citation:

- Jordan Peper*, Zhenjiang Mao*, Yuang Geng, Siyuan Pan, Ivan Ruchkin.

Four Principles for Physically Interpretable World Models [Arxiv] [OpenReview] [Github] [Poster] [Slides].

In Proceedings of the 2nd International Conference on Neuro-symbolic Systems (NeuS), Philadelphia, PA, 2025. * Co-first authors.

In the meantime, the RPI collaborators Thomas and Rado presented a joint work on state-based conformal prediction.

Citation:

- Thomas Waite, Yuang Geng, Trevor Turnquist, Ivan Ruchkin*, and Radoslav Ivanov*.

State-Dependent Conformal Perception Bounds for Neuro-Symbolic Verification of Autonomous Systems [Arxiv] [Poster] [Slides summary] [Slides talk].

In Proceedings of the 2nd International Conference on Neuro-symbolic Systems (NeuS), Philadelphia, PA, 2025. * Co-last authors.

- Zhongzheng and Yuyang give racing demo to Nelms family

Thanks to Yuyang and Zhongzheng for impressing the visitors from the generous Nelms family on a new racing track!

- Jordan & Ivan present world models at ICRA 2025

To the audience’s excitement, Jordan and Ivan presented the lab’s work on principles of physically interpretable world models in two capacities:

- As a spotlight poster at the Workshop on Foundation Models and Neuro-Symbolic AI for Robotics (FMNS)

- As a late-breaking result poster in the main ICRA conference.

Citation:

- Jordan Peper*, Zhenjiang Mao*, Yuang Geng, Siyuan Pan, Ivan Ruchkin.

Four Principles for Physically Interpretable World Models [Arxiv] [OpenReview] [Github] [Poster].

In Proceedings of the 2nd International Conference on Neuro-symbolic Systems (NeuS), Philadelphia, PA, 2025. * Co-first authors.

- Jordan and Ivan present at CPS-IoT Week 2025

Several events transpired at the CPS-IoT Week in Irvine, CA:

- Jordan presented his poster (pictured) on probabilistic verification & validation at HSCC.

- Ivan presented his collaborative work on imprecise neural networks at HSCC.

- Ivan chaired the ICCPS poster/demo session and a couple of paper sessions, and also judged posters in the PhD forum.

- Lorant wins the student leader and best poster awards

Congratulations to Lorant Domokos on winning two (!) awards from the Department of Mechanical and Aerospace Engineering (MAE) at the end of his senior year:

- 2025 MAE Student Leadership Award

- 1st Place Undergraduate Poster at the 2025 MAE Poster Competition

- (The poster on drift detection and friction estimation can be found here.)

- Carson & Lorant present at the UF Spring Symposium

Carson Sobolewski and Lorant Domokos presented their posters at the UF Spring Undergraduate Research Symposium 2025 as part of their scholarship programs. Allegedly, Chris Oeltjen was also in attendance.

- New preprint: conservative perception abstractions

A new preprint is out on low-dimensional symbolic models of deep visual perception that enable conservative (i.e., non-overconfident) safety analysis. Citation:

- Matthew Cleaveland, Pengyuan Lu, Oleg Sokolsky, Insup Lee, Ivan Ruchkin. Conservative Perception Models for Probabilistic Model Checking [Arxiv]. Preprint, 2025.

- Ivan receives the NSF CAREER Award

While everyone and their brother are chasing guarantees for autonomous systems, their assumptions are being overlooked. Ivan got the prestigious NSF CAREER grant to fix that problem: the new project will focus on the careful understanding, modeling, validation, and monitoring of important assumptions in learning-based autonomous systems. More:

- New preprint: generalizable image repair

- New preprint: principles for interpretable world models

Our new paper articulates four key principles for physical interpretability of world models. We paint a broader picture of neuro-symbolic world models, beyond our recent preprint on a specific technique for physically interpretable world models for trajectory prediction.

Update: accepted and presented at NeuS 2025! It also got publicized at ICRA.

Citation:

- Jordan Peper*, Zhenjiang Mao*, Yuang Geng, Siyuan Pan, Ivan Ruchkin.

Four Principles for Physically Interpretable World Models [Arxiv] [OpenReview] [Github] [Poster] [Slides].

In Proceedings of the 2nd International Conference on Neuro-symbolic Systems (NeuS), Philadelphia, PA, 2025. * Co-first authors.

- New preprint: state-based conformal prediction

Our first collaborative paper on the NSF Neuro-Symbolic Bridge project with RPI is online! It develops a novel way to get tight conformal prediction bounds on perception error in order to improve the accuracy of reachability verification.

Update: published and presented at NeuS’25!

Citation:

- Thomas Waite, Yuang Geng, Trevor Turnquist, Ivan Ruchkin*, and Radoslav Ivanov*.

State-Dependent Conformal Perception Bounds for Neuro-Symbolic Verification of Autonomous Systems [Arxiv] [Poster] [Slides summary] [Slides talk] [Github].

In Proceedings of the 2nd International Conference on Neuro-symbolic Systems (NeuS), Philadelphia, PA, 2025. * Co-last authors.

- TEA lab does double racing demos for Spring Visit

Great job to those who put together the demos for the ECE and MAE Spring Visits, particularly Zhongzheng and the F1/10 team!

- New preprint: stratified neuro-symbolic architecture

Check out our nice and short position paper. The key idea is to intermingle neural components and symbolic knowledge at each level of the autonomy stack.

Update: published in FSE’25!

Citation:

- Xi Zheng, Ziyang Li, Ivan Ruchkin, Ruzica Piskac, Miroslav Pajic.

NeuroStrata: Harnessing Neurosymbolic Paradigms for Improved Design, Testability, and Verifiability of Autonomous CPS [Arxiv] [Slides].

In Proceedings of the International Conference on the Foundations of Software Engineering (FSE) – Ideas, Visions and Reflections Track (IVR), Trondheim, Norway, 2025.

- Ivan co-chairs the poster/demo session at ICCPS 2025

The International Conference on Cyber-Physical Systems (ICCPS) 2025 is seeking poster and demo submissions for its 16th iteration, in Irvine, CA. Details can be found here.

- New preprint: physically interpretable world models

Our recent preprint develops an architecture and a training method to give latent states physical meaning in the context of trajectory prediction:

- Zhenjiang Mao, Ivan Ruchkin.

Towards Physically Interpretable World Models: Meaningful Weakly Supervised Representations for Visual Trajectory Prediction [Arxiv] [Poster].

Preprint, 2024.

- MANY posters, demos, awards at NELMS IoT conference

Congratulations to many students from TEA lab presenting their work and getting recognition!

Demos

Mrinall and Sam show off their DonkeyCar

Lorant, Carson, Chris, and Anthony show off their F1/10 car

Posters

Sam and his work on language-enhanced OOD detection

Yuang and his work on high-dimensional verification

Zhenjiang and his work on interpretable world models

Awards

First place for the F1/10 demo

2nd Place for Zhenjiang’s poster

Honorable Mention for Sam’s poster

Honorable Mention for Yuang’s poster

- Two surveys: neuro-symbolic AIoT and CPS sustainability

- Zhen Lu, Imran Afridi, Hong Jin Kang, Ivan Ruchkin, Xi Zheng. Surveying Neuro-Symbolic Approaches for Reliable Artificial Intelligence of Things [Springer]. In Springer Journal of Reliable Intelligent Environments (JRIE), 2024.

- Ankica Barišić, Jácome Cunha, Ivan Ruchkin, Ana Moreira, João Araújo, Moharram Challenger, Dušan Savić, Vasco Amaral. Modelling Sustainability in Cyber-Physical Systems: a Systematic Mapping Study [Elsevier]. In Elsevier Sustainable Computing: Informatics and Systems (SUSCOM), 2024.

- Sam, Yuang, Zhenjiang present posters at UF AI Days 2024

On October 29, 2024, the three students presented posters about the following papers:

- Zhenjiang Mao, Dong-You Jhong, Ao Wang, Ivan Ruchkin. Language-Enhanced Latent Representations for Out-of-Distribution Detection in Autonomous Driving [Arxiv] [Slides]. Robot Trust for Symbiotic Societies (RTSS) Workshop (co-located with ICRA 2024), Yokohama, Japan, 2024.

- Zhenjiang Mao, Siqi Dai, Yuang Geng, Ivan Ruchkin. Zero-shot Safety Prediction for Autonomous Robots with Foundation World Models [Arxiv] [Poster]. Back to the Future: Robot Learning Going Probabilistic Workshop (co-located with ICRA 2024), Yokohama, Japan, 2024.

- Yuang Geng, Jake Brandon Baldauf, Souradeep Dutta, Chao Huang, Ivan Ruchkin. Bridging Dimensions: Confident Reachability for High-Dimensional Controllers [Arxiv] [Springer] [Github] [Poster 1] [Poster 2] [Slides] [Demo (w/ subs)] [Demo (w/o subs)] [Talk]. In Proceedings of the International Symposium on Formal Methods (FM), Milan, Italy, 2024.

- Ivan presents calibrated visual safety prediction at TACPS workshop at ESWEEK

Ivan gave an invited talk, “How Safe Will I Be Given What I See? Calibrated Visual Safety Chance Prediction with (Foundation) World Models”. The discussion was very active and generated enough questions for the rest of Zhenjiang’s PhD.

Talk abstract: In safety-critical autonomous systems, safety prediction traditionally relies on low-dimensional data with specific physical meanings, such as poses and velocities. However, such data is not always available, which leaves only high-dimensional sensor observations, such as images from cameras or LiDAR scans, and makes safety prediction increasingly challenging. This talk reports on the recent techniques for using high-dimensional observation data for safety prediction; at the heart of these techniques is the notion of a world model, which can predict future observations without meaningful low-dimensional data. We present several world models implemented with neural representation learning as well as foundation models for image segmentation and natural language prediction. Additionally, we propose a novel uncertainty quantification technique that combines confidence calibration with conformal prediction.

- Yuang presents high-dimensional reachability at FM 2024

Yuang Geng presented his work on reachability for vision-based neural-network controllers at the 26th International Symposium on Formal Methods (FM). Reportedly, the attendees were curious about the mapping between states and images.

Citation and further materials:

- Yuang Geng, Jake Brandon Baldauf, Souradeep Dutta, Chao Huang, Ivan Ruchkin.

Bridging Dimensions: Confident Reachability for High-Dimensional Controllers [Arxiv] [Springer] [Github] [Poster 1] [Poster 2] [Slides] [Demo (w/ subs)] [Demo (w/o subs)] [Talk].

In Proceedings of the International Symposium on Formal Methods (FM), Milan, Italy, 2024.

- Zhenjiang presents calibrated safety predictors at L4DC 2024

Zhenjiang Mao presented his work on learning-enabled safety prediction (poster, paper) at the 6th Annual Conference on Learning for Decision and Control (L4DC 2024) in Oxford, UK. Reportedly, the attendees like math more than he does. Citation:

- Zhenjiang Mao, Carson Sobolewski, Ivan Ruchkin. How Safe Am I Given What I See? Calibrated Prediction of Safety Chances for Image-Controlled Autonomy [PMLR] [Arxiv] [Poster 2023] [Poster 2024] [Github]. In Proceedings of the Annual Learning for Dynamics & Control Conference (L4DC), Oxford, UK, 2024.

- New NSF project on neuro-symbolic perception in CPS

The new project is named “Neuro-Symbolic Bridge: From Perception to Estimation & Control”. Its goal is to develop a neuro-symbolic calibration framework to repair the mismatch between perception neural networks and downstream cyber-physical tasks such as state estimation and control. It will be carried out in collaboration with Radoslav Ivanov at RPI. More information: ECE website, NSF website.

Update: our poster summarizes the first year of progress at the NSF CPS PI meeting.

- Ivan spends summer at AFRL as a visiting faculty

For Summer 2024, Ivan Ruchkin will join the Visiting Faculty Research Program (VFRP) at the Air Force Research Laboratory (AFRL) Information Directorate (RI) in Rome, NY. The program is organized by the Griffis Institute. Ivan will work on advancing safety verification for high-dimensional controllers. He will also participate in the cyber assurance group’s efforts on testing and assurance for learning-enabled systems.

- Zhenjiang presents two papers and a poster at ICRA 2024

The papers were on foundation world models and language-enhanced OOD. The audience response was, reportedly, positive and encouraged the implementation on physical robotic systems. Citations:

- Zhenjiang Mao, Dong-You Jhong, Ao Wang, Ivan Ruchkin. Language-Enhanced Latent Representations for Out-of-Distribution Detection in Autonomous Driving [Arxiv] [Slides]. Robot Trust for Symbiotic Societies (RTSS) Workshop (co-located with ICRA 2024), Yokohama, Japan, 2024.

- Zhenjiang Mao, Siqi Dai, Yuang Geng, Ivan Ruchkin. Zero-shot Safety Prediction for Autonomous Robots with Foundation World Models [Arxiv] [Poster]. Late-breaking results posters at ICRA 2024 and Back to the Future: Robot Learning Going Probabilistic Workshop (co-located with ICRA 2024), Yokohama, Japan, 2024.

- Ivan presents NN repair with preservation at ICCPS 2024

In the first presentation of ICCPS 2024, Ivan showcased a method to repair a neural network controller while preserving its verification results. Citation:

- Pengyuan Lu, Matthew Cleaveland, Oleg Sokolsky, Insup Lee, Ivan Ruchkin. Repairing Learning-Enabled Controllers While Preserving What Works [Arxiv] [Github] [Slides]. In Proceedings of the International Conference on Cyber-Physical Systems (ICCPS), Hong Kong, China, 2024.

- Language-enhanced OOD detection: new preprint online

Our paper gives users of autonomous cars the ability to describe in natural language what conditions they consider nominal or anomalous.

Citation:

- Zhenjiang Mao, Dong-You Jhong, Ao Wang, Ivan Ruchkin. Language-Enhanced Latent Representations for Out-of-Distribution Detection in Autonomous Driving [Arxiv]. Preprint, 2024.

- F1/10 racing demo for the ECE External Advisory Board

Industry leaders visited the ECE department to witness the variety of work happening here. Thanks to everyone who helped, especially Carson Sobolewski and Lorant Domokos who led the demonstration. Some videos and photos from the event:

- First batch of students finishes the CURE racing course

Congratulations to the nine freshmen participants: Ramsey Makan, Jonas Dickens, Tyler Ruble, Christopher Oeltjen, Carter Amaba, Aditya Gandhi, Emilia Delaune, Ethan Krol, and Giancarlo Vidal! And a big thank you to the mentors: Ao Wang, Sam Jhong, Lorant Domokos, and Carson Sobolewski. More information on this CURE course is here.

- Foundation world models: new preprint online

Our paper develops training-free world models based on foundation models with interpretable latent states.

Update: presented at the probabilistic robotics workshop at ICRA’24.

Citation:

- Zhenjiang Mao, Siqi Dai, Yuang Geng, Ivan Ruchkin.

Zero-shot Safety Prediction for Autonomous Robots with Foundation World Models [Arxiv] [Poster].

Back to the Future: Robot Learning Going Probabilistic Workshop (co-located with ICRA 2024), Yokohama, Japan, 2024.

- TEA Lab moves to Malachowsky Hall

Now found in Malachowsky 4100, with a brand new racing track coming soon!

- Verifying high-dimensional controllers: new preprint online

- Ivan participates in a panel on dependable space autonomy

- Ivan serves on the PC of ICCPS’24 and AAAI’24

Consider submitting your papers there.

- How safe am I given what I see? New preprint online

Update: a poster was presented at UF AI Days 2023. This paper develops safety chance prediction for image-controlled autonomous systems with calibration guarantees. Citation:

- Zhenjiang Mao, Carson Sobolewski, Ivan Ruchkin. How Safe Am I Given What I See? Calibrated Prediction of Safety Chances for Image-Controlled Autonomy [arxiv]. Preprint, in submission.

- Invited talk at the DACPS workshop & ETH Autonomy Talks

Update 1: an extended version of this talk was given at a UF MAE Affiliate Seminar. The recording can be found here (UF login required).

Update 2: another version of this talk was given at the ETH Autonomy Talks (video).

Update 3: yet another version of this talk was given as a CNEL Seminar.

The talk titled “Verify-then-Monitor: Calibration Guarantees for Safety Confidence” (see the slides here) was presented at the Sixth International Workshop on Design Automation for Cyber-Physical Systems (DACPS), part of the Design Automation Conference (DAC) 2023.

Abstract: Autonomous cyber-physical systems (CPS) are increasingly deployed in complex and safety-critical environments. To help CPS interact with such environments, learning-enabled components, such as neural networks, often implement perception and control functions. Unfortunately, the complexity of the environments and learning components is a major challenge to ensuring the safety of CPS. An emerging assurance paradigm prescribes verifying as much of the CPS as possible at design time – and then monitoring the probability of safety at run time in case of unexpected situations. How can we guarantee that the monitor produces a probability that is well-calibrated to the true chance of safety? This talk will overview our recent answers in two settings. The first combines Bayesian filtering with probabilistic model checking of Markov decision processes. The second is based on confidence monitoring of assumptions behind closed-loop neural-network verification.

- TEA Lab hosts K-12 students for the Robotics-AIoT Visit Day

On June 15, 2023, the UF ECE Department hosted ~30 school students from the Westwood Middle School and Buchholz High School for a day visit at the Robotics, AI, and IoT research laboratories for educational presentations, research demonstrations, and mentoring discussions. It was a lot of fun for everyone!

Photos from the event

Kudos to the other participating labs: SmartDATA Lab, RoboPI Lab, and WISE Lab.

- Causal NN controller repair presented at ICAA’23

Shown above is a 5-step workflow of our causal repair: (1) Extract the behaviors of a learning component as an I/O table. (2) Encode the dependency of the desired property outcome on the I/O behaviors with a Halpern-Pearl model. (3) Search for a counterfactual model value assignment, revealing an actual cause and a repair. (4) Decode the found assignment as a counterfactual component behavior. (5) Replace the original learning component with a repaired component that performs this counterfactual behavior to fix the system. Citation:

- Pengyuan Lu, Ivan Ruchkin, Matthew Cleaveland, Oleg Sokolsky, Insup Lee. Causal Repair of Learning-Enabled Cyber-Physical Systems [ArXiv]. In Proceedings of the International Conference on Assured Autonomy (ICAA), Baltimore, MD, 2023.

- Conservative safety monitoring presented at NFM’23

Shown above is our conservative monitoring approach that leverages probabilistic reachability offline and combines it with calibrated state estimation. Citation:

- Matthew Cleaveland, Oleg Sokolsky, Insup Lee, Ivan Ruchkin. Conservative Safety Monitors of Stochastic Dynamical Systems [ArXiv] [Springer] [Slides]. In Proceedings of the NASA Formal Methods Symposium (NFM), Houston, TX, 2023.

- DonkeyCars are racing autonomously

Our lab is now running neural network-controlled racing cars based on raw camera images:

Sometimes things don’t go as planned:

Such is the brittle nature of deep learning. We’ll be working on predicting and preventing such accidents.

- Ivan Ruchkin to serve on the PC of ICCPS’23

- TEA Lab is established

TEA lab’s mission is to develop engineering methodologies for safe autonomous systems that are aware of their own limitations, as illustrated above. More details about this vision can be found in this slide deck.
