Category: News

-

Ivan does publicity for a neuro-symbolic conference

—

Ivan Ruchkin is serving as the publicity chair of the 3rd International Conference on Neuro-Symbolic Systems (NeuS) 2026. Looking forward to your submissions!

-

Jordan presents V&V for vision-based systems at ATVA

—

Jordan Peper went all the way to Bengaluru, India, to present our work (in collaboration with UIUC) on unified verification and validation of vision-based autonomy at the International Symposium on Automated Technology for Verification and Analysis (ATVA). Allegedly, this is a hot problem, but the abstraction is quite complex. That’s what it takes — for…

-

AutoGators win Most Innovative @ Autonomy Hackathon

—

Congratulations to the team AutoGators (Krish Kapadia, Yilin Liu, Zhenjiang Mao, Ishaan Sen, Zhongzheng Zhang, Zhuoyang Zhou) on winning the “Most Innovative Solution” Award ($10K) at the Mission Autonomy Hackathon organized by AWS and Vanderbilt. Here is the problem they solved: “Given a swarm of autonomous aerial drones tracking a resupply convoy, use aerial imagery to…

-

IROS showcase: world models, image repair, data cleaning

—

Ivan went all the way to Hangzhou, China, to present several research works on world models, image repair, and data cleaning. Here are the paper citations on which these presentations were based:

-

Trevor and Jordan win student research awards at ESWEEK

—

Congrats to Trevor Turnquist and Jordan Peper on winning the First Undergraduate and Runner-Up Graduate Awards at the ACM Student Research Competition hosted at Embedded Systems Week 2025!

-

Demos at HWCOE celebration and dean’s tailgate

The TEA Lab and the newly formed Gator Autonomous Racing (GAR) club collaborated on two back-to-back demos. Congrats to the students on successfully demoing our autonomous racing technology!

-

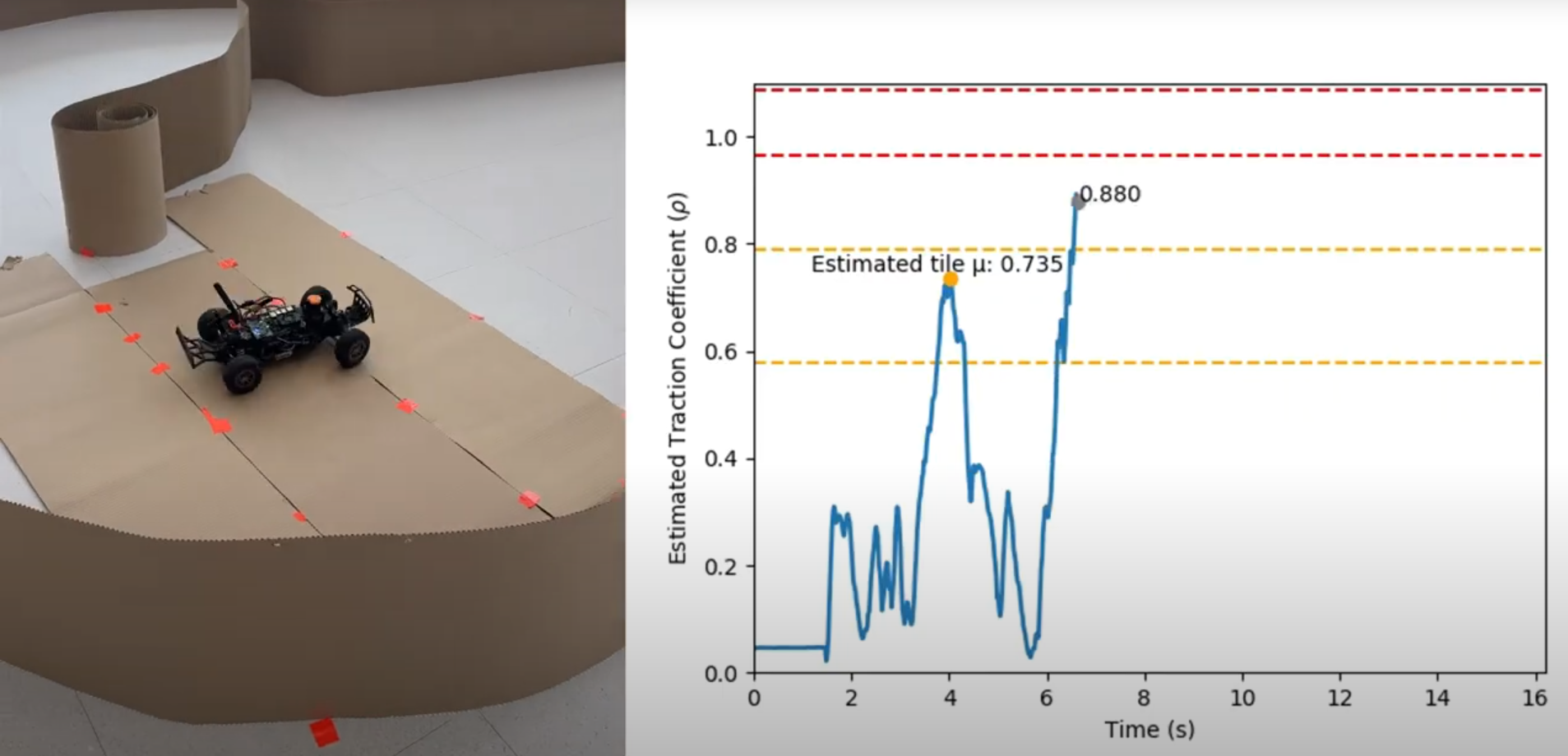

New preprint: online friction estimation for racing

Our lab pushed out an experimental project on detecting slip and estimating the tire friction from the onboard sensors (lidar & IMU) on RoboRacer (aka F1/10) cars. No fancy models, no sophisticated data collection, no need for post-processing. It turned out pretty accurate!

-

New preprint: unified V&V for vision systems

—

In collaboration with UIUC researchers, we have developed a methodology to build uncertainty-aware models (imprecise Markov decision processes) of vision-guided autonomous systems. These models support both verification of such systems (to get safety guarantees) and validation (to quantify the applicability of these guarantees to the real world) in a unified way. Accepted at ATVA 2025, this paper is…

-

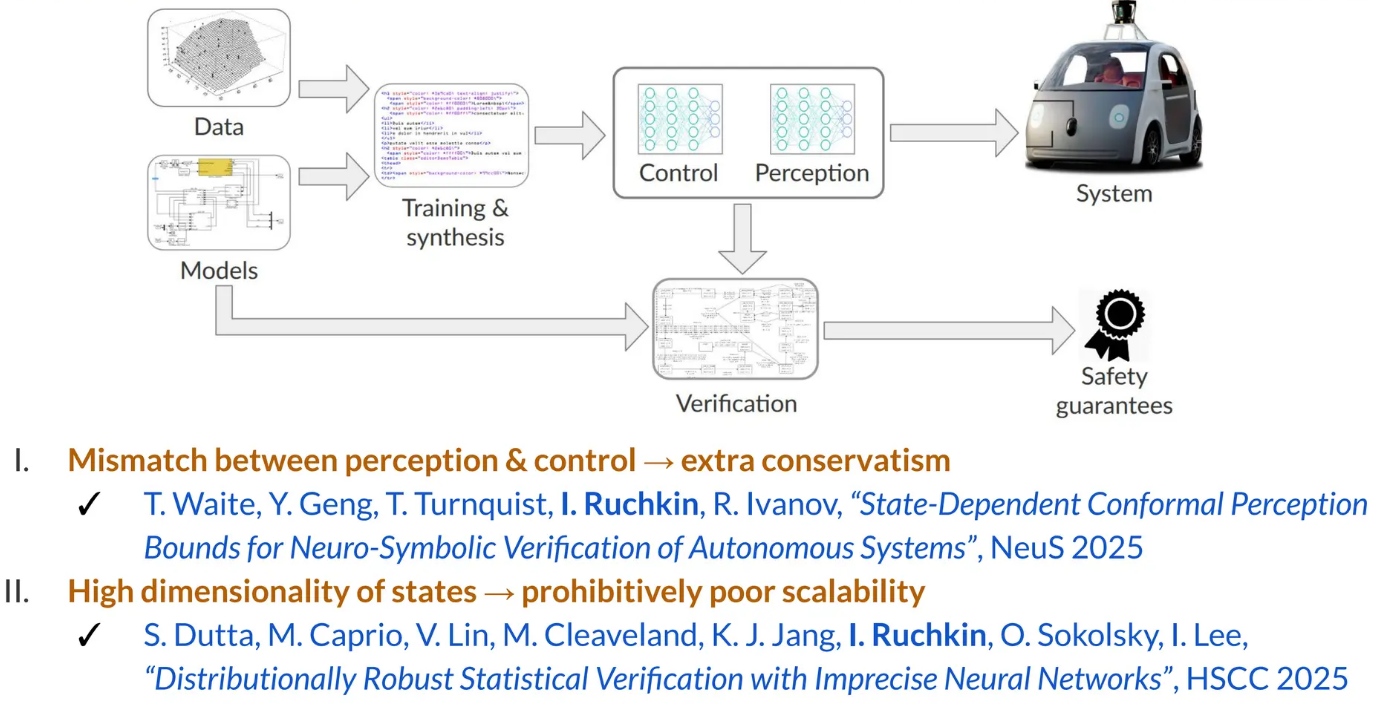

Ivan talks about high-dimensional verification at USC

The talk included methods for dealing with the high dimensionality of perception and state space. Setting a duration record for Ivan’s research talks, it lasted for 90 minutes (thanks to many insightful questions!).

-

New NSF project on verifiable safety under visual shifts

We are excited to start the VISUALS project: Verifiable Information-Theoretic Safety Under Augmented Latent Shifts, in collaboration with Yuheng Bu (UCSB) and Jose Principe (UF), sponsored by the NSF EPCN program. This project aims to create an end-to-end methodology to model, analyze, quantify, detect, and adapt to changes in the visual environment of an autonomous…