Author: Ivan

-

Demos at HWCOE celebration and dean’s tailgate

The TEA Lab and the newly formed Gator Autonomous Racing (GAR) club collaborated on two back-to-back demos. Congrats to the students on successfully demoing our autonomous racing technology!

-

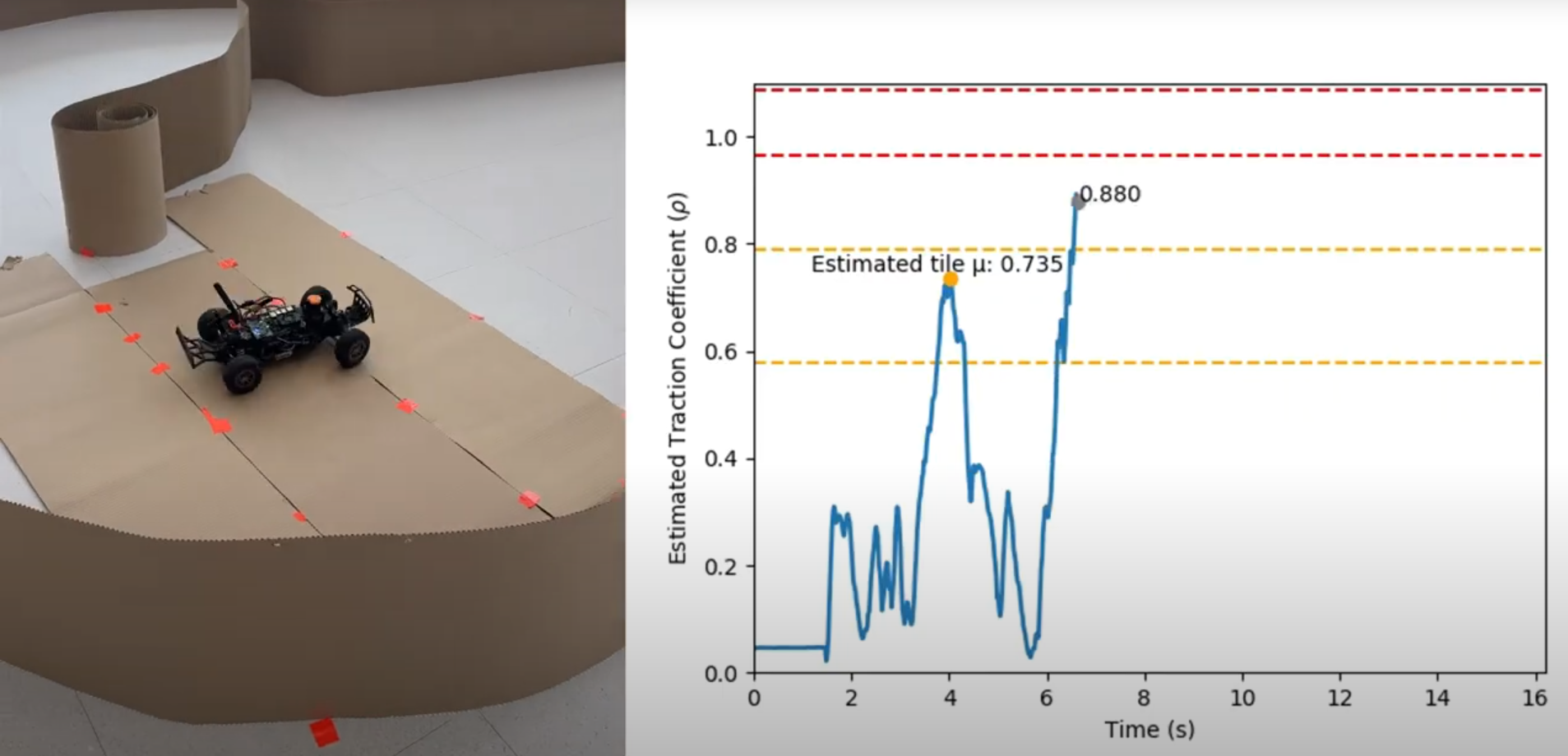

New preprint: online friction estimation for racing

Our lab released an experimental project on detecting slip and estimating tire friction from the onboard sensors (lidar and IMU) of RoboRacer (aka F1/10) cars. No fancy models, no sophisticated data collection, no need for post-processing. It turned out pretty accurate!

-

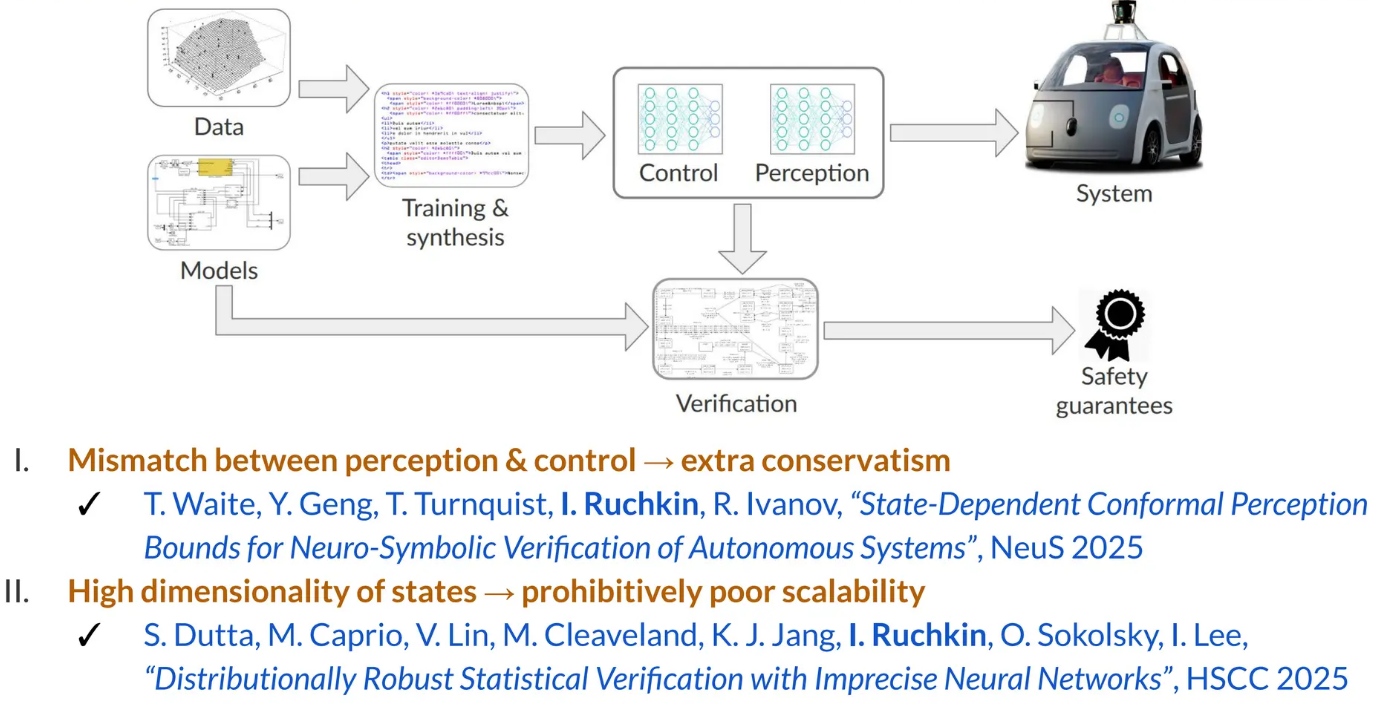

Ivan presents conservative perception abstractions at Allerton

Ivan talked about conservative abstractions of perception-driven systems at the Allerton Conference, held at the University of Illinois Urbana-Champaign. The rumor is that these abstractions are too conservative.

-

New preprint: unified V&V for vision systems

In collaboration with UIUC researchers, we have developed a methodology to build uncertainty-aware models (imprecise Markov decision processes) of vision-guided autonomous systems. These models support both the verification of such systems (to get safety guarantees) and their validation (to quantify how well these guarantees apply to the real world) within one framework. Accepted at ATVA 2025, this paper is…

-

Ivan talks about high-dimensional verification at USC

The talk covered methods for dealing with the high dimensionality of perception and state spaces. It set a duration record for Ivan’s research talks at 90 minutes (thanks to many insightful questions!).

-

New NSF project on verifiable safety under visual shifts

We are excited to start the VISUALS project: Verifiable Information-Theoretic Safety Under Augmented Latent Shifts, in collaboration with Yuheng Bu (UCSB) and Jose Principe (UF), sponsored by the NSF EPCN program. This project aims to create an end-to-end methodology to model, analyze, quantify, detect, and adapt to changes in the visual environment of an autonomous…

-

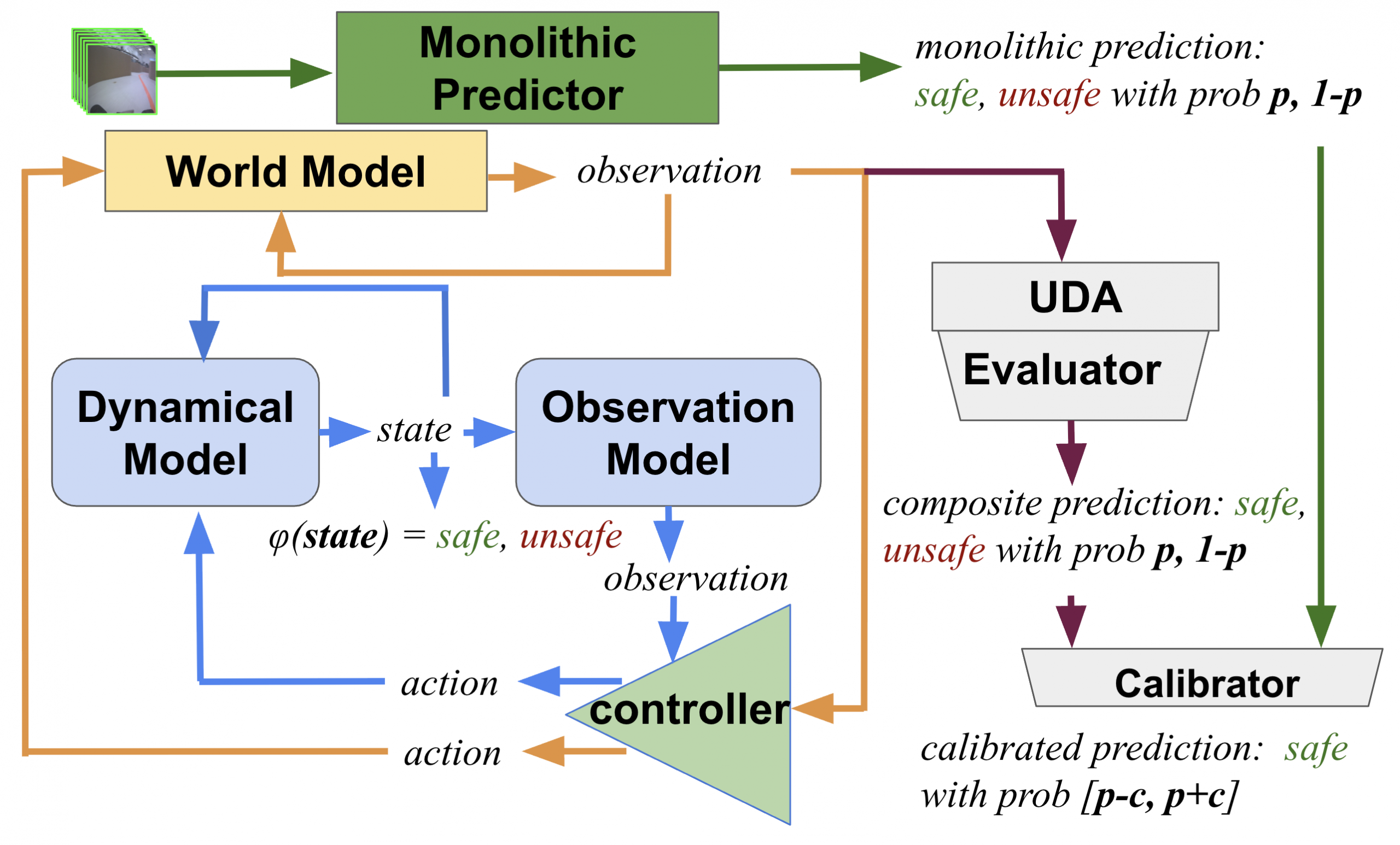

New preprint: how safe will I be given what I saw?

An extension of our modular family of learning-based safety predictors from L4DC 2024, now with transformers and quantization!

-

TEA Lab hosts incoming freshmen

This summer, the TEA Lab welcomed a group of incoming UF freshmen as part of STEPUP (the Successful Transition and Enhanced Preparation for Undergraduates Program). The visiting students learned about the importance of safe and trustworthy autonomy, got to poke around the autonomous racing cars, and asked great questions!

-

Ivan talks about conformal reachability at CAV

Ivan went all the way to Croatia to tell people how to put conformal prediction in a closed loop at the International Conference on Computer-Aided Verification (CAV). Doing so would let you verify autonomous systems with neural networks of any size (yes, even a VLA model like RT-2!). The decisive question is, to apply conformal prediction at…

-

Our world models are taking off

Our recent dive into world models is blossoming in several intriguing directions: multimodality, hallucinations, and modular verification. While we’re pushing these directions forward, take a look at a nice overview article about our research on world models.